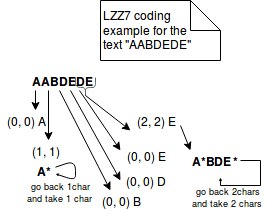

Commit 8bfb028 (Improve zippy decompression speed) introduces a crash when snappy is compiled with _FORTIFY_SOURCE against musl libc.

snappy_test_tool can benchmark Snappy against a few other compression libraries (zlib, LZO, LZF, and QuickLZ), if they were detected at configure time. To benchmark using a given file, give the compression algorithm you want to test Snappy against (e.g. zlib) and then a list of one or more file names on the command line.

If you want to change or optimize Snappy, please run the tests and benchmarks to verify you have not broken anything. The testdata/ directory contains the files used by the microbenchmarks, which should provide a reasonably balanced starting point for benchmarking. (Note that the baddata.snappy files are not intended as benchmarks; they are used to verify correctness in the presence of corrupted data in the unit test.)

Contact: for the latest version and other information, see.

Snappy uses 64-bit operations in several places to process more data at once than would otherwise be possible. Snappy assumes unaligned 32 and 64-bit loads and stores are cheap. On some platforms, these must be emulated with single-byte loads and stores, which is much slower. Snappy assumes little-endian throughout, and needs to byte-swap data in several places if running on a big-endian platform.

Experience has shown that even heavily tuned code can be improved. Performance optimizations, whether for 64-bit x86 or other platforms, are of course most welcome; see "Contact".

You need the CMake version specified in CMakeLists.txt or later to build.

To uncompress, call snappy::Uncompress(input.data(), input.size(), &output), where "input" and "output" are both instances of std::string. There are other interfaces that are more flexible in various ways, including support for custom (non-array) input sources. See the header file for more information.

When you compile Snappy, the following binaries are compiled in addition to the library itself. You do not need them to use the compressor from your own library, but they are useful for Snappy development. snappy_unittests contains unit tests, verifying correctness on your machine in various scenarios. snappy_benchmark contains microbenchmarks used to tune compression and decompression performance.
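To make the portability notes above concrete, here is a minimal sketch of what "unaligned loads" and "byte-swapping on big-endian platforms" can look like in portable C++. The helper names (UnalignedLoad32, ByteSwap32, IsLittleEndian, LoadLittleEndian32) are hypothetical and chosen for illustration; they are not claimed to match Snappy's internal code.

```cpp
#include <cassert>
#include <cstdint>
#include <cstring>

// Hypothetical helper (not Snappy's API): read 32 bits from a possibly
// unaligned address. memcpy avoids the undefined behavior of casting the
// pointer; compilers typically turn it into a single load on platforms
// where unaligned access is cheap.
inline std::uint32_t UnalignedLoad32(const void* p) {
  assert(p != nullptr);
  std::uint32_t v;
  std::memcpy(&v, p, sizeof(v));
  return v;
}

// Hypothetical helper: reverse the byte order of a 32-bit value.
inline std::uint32_t ByteSwap32(std::uint32_t v) {
  return ((v & 0x000000FFu) << 24) | ((v & 0x0000FF00u) << 8) |
         ((v & 0x00FF0000u) >> 8) | ((v & 0xFF000000u) >> 24);
}

// Runtime check of the host byte order: on a little-endian host the
// lowest-addressed byte of the value 1 is 1.
inline bool IsLittleEndian() {
  const std::uint16_t probe = 1;
  unsigned char first;
  std::memcpy(&first, &probe, 1);
  return first == 1;
}

// Read a 32-bit little-endian value regardless of host byte order:
// a plain load on little-endian hosts, load plus byte swap otherwise.
inline std::uint32_t LoadLittleEndian32(const void* p) {
  const std::uint32_t v = UnalignedLoad32(p);
  return IsLittleEndian() ? v : ByteSwap32(v);
}
```

On a little-endian host the swap branch is never taken, which is why such hosts pay no extra cost; a big-endian host pays for the swap on every load.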

Snappy has previously been called "Zippy" in some Google presentations and the like. For license information, see the included COPYING file.

On a single core of a Core i7 processor in 64-bit mode, Snappy compresses at about 250 MB/sec or more and decompresses at about 500 MB/sec or more. (These numbers are for the slowest inputs in our benchmark suite; others are much faster.) In our tests, Snappy usually is faster than algorithms in the same class (e.g. LZO, LZF, QuickLZ, etc.) while achieving comparable compression ratios.

Typical compression ratios (based on the benchmark suite) are about 1.5-1.7x for plain text, about 2-4x for HTML, and of course 1.0x for JPEGs, PNGs and other already-compressed data. Similar numbers for zlib in its fastest mode are 2.6-2.8x, 3-7x and 1.0x, respectively. More sophisticated algorithms are capable of achieving yet higher compression rates, although usually at the expense of speed. Of course, compression ratio will vary significantly with the input.

Although Snappy should be fairly portable, it is primarily optimized for 64-bit x86-compatible processors, and may run slower in other environments.
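The figures quoted here can be turned into rough back-of-the-envelope estimates. The sketch below assumes that a ratio such as "1.5x" means original size divided by compressed size, and that 1 MB means 10^6 bytes; the text does not state the second point, so treat it as an assumption. The function names are hypothetical.

```cpp
#include <cassert>
#include <cstddef>

// Compression ratio as "original size / compressed size", so 1.0 means
// no size reduction (as for already-compressed data such as JPEGs).
inline double CompressionRatio(std::size_t original_bytes,
                               std::size_t compressed_bytes) {
  assert(compressed_bytes > 0);
  return static_cast<double>(original_bytes) /
         static_cast<double>(compressed_bytes);
}

// Rough time in seconds to process `bytes` at a throughput given in MB/sec.
// Assumption: 1 MB = 1,000,000 bytes.
inline double EstimateSeconds(std::size_t bytes, double mb_per_sec) {
  assert(mb_per_sec > 0.0);
  return static_cast<double>(bytes) / (mb_per_sec * 1e6);
}
```

For instance, a 1500-byte input that compresses to 1000 bytes has a ratio of 1.5, and 500,000,000 bytes at 250 MB/sec would take about 2 seconds under the stated assumption.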
